Better Predictions in Renewable Energy

The Problem: Really Bad Predictions

Recently Michael Liebreich (founder of Bloomberg New Energy Finance) gave his last “State of Industry Keynote”. The link leads to a video and all the 170+ slides: well worth it!

Recently Michael Liebreich “dropped the mike” at Bloomberg New Energy Finance

He summarized it in a blog post with the title: “In Energy and Transportation, Stick it to the Orthodoxy” and followed up with a 10-point plan to fix energy (and transport) forecasting. He describes how the most authoritative organizations in energy and transportation (e.g. the International Energy Agency – IEA – and Energy Information Administration – US EIA) have been demonstrably and colossally wrong in their predictions/scenarios and how we should start to believe in more daring (and hopeful) futures in energy and mobility.

Because my own visualization was first, I’ll use that to show how bad it is for solar panels:

See my blog post for a description of how this graph was made.

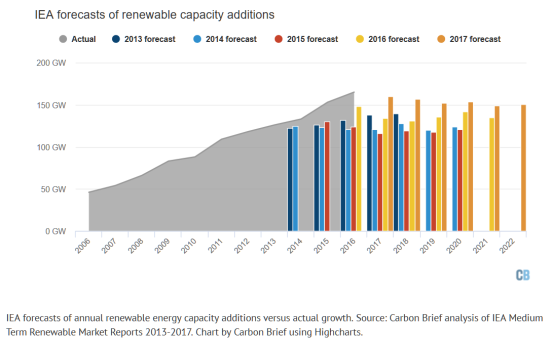

And now the IEA has produced a new forecast for 2017. Using the methodology of yearly additions I proposed, CarbonBrief shows it is basically same old, same old. They made the analysis for all renewables together (not just solar) but the picture shows the effect is the same as always:

- The starting point for the prediction has moved up (again). This is something the IEA has no influence over: reality is asserting itself.

- The prediction of annual additions stays linear and flat (again). So the IEA again denies the exponential growth of renewables.

By the way: the IEA is the 900-pound gorilla in this field but the EIA (the US counterpart of the IEA) is just as bad, and most other organizations have also severely underestimated the growth of solar.

This is a real problem because it discourages renewable investments and robs us of hope when reality in fact shows us that the investments are working and there is good reason for hope. What explains this negative outlook?

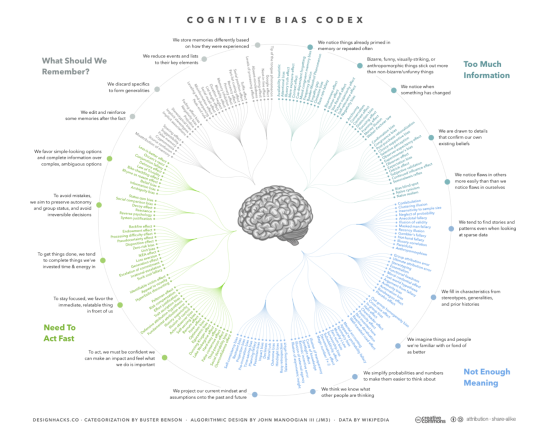

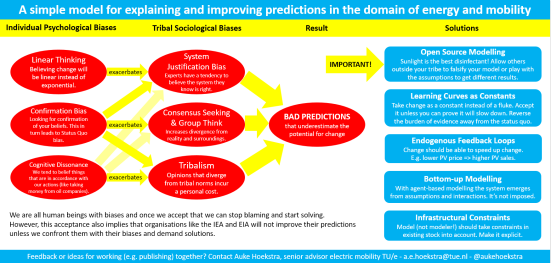

Explanation (1): We are Human with Lots of Biases

All humans (including me!) have biases and they mostly favor the status quo. That is especially true for experts. In order to counter that we should rely more on trends and less on the status quo.

Darwin was ridiculed when he first proposed the theory of evolution

Before we can begin to make predictions we should be able to take a hard look at ourselves and the way we naturally make decisions. Ever since Darwin published “Descent of Man”, humanity has been coming to terms with the fact that we are just another animal.

We might have better logical reasoning than animals and we seem to be the only one using advanced technology but our desires and decisions are still driven largely by our lizard brain. We are experts at rationalizing our decisions after the fact but our emotions are often a more powerful determinant. So if we are honest we must acknowledge that we are subject to a whole range of cognitive biases that skew our ability to make good predictions.

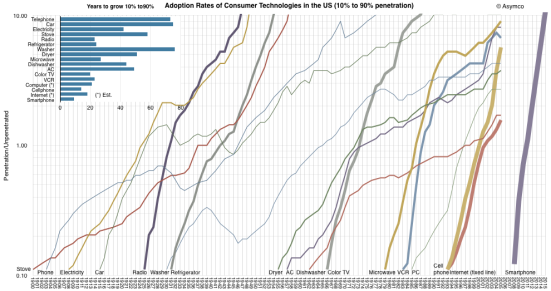

One bias that has been widely noted is that humans are linear thinkers in a non-linear world. I think Ray Kurzweil of the Singularity University (of which Eindhoven University of Technology is a partner) has done more than anybody to bring this awareness into our consciousness.

Another very strong bias is confirmation bias [1], which makes us select facts that fit the worldview we already possess.

The sad fact is that when it comes to radical innovation, experts are even less reliable than the average person. Experts are almost by definition focused on the past (because that is where they get their expertise from) and this makes them prone to status quo bias [2] and system justification bias [3]. The result is famously formulated by Arthur C. Clarke in his first law:

When a distinguished but elderly scientist states that something is possible, he is almost certainly right. When he states that something is impossible, he is very probably wrong.

Finally there is the often misunderstood phenomenon of cognitive dissonance. In “An Inconvenient Truth”, Al Gore formulated the influence of this phenomenon on certain forecasters and politicians as:

It’s hard to convince a person if his paycheck depends on not understanding you.

(The quote is originally from Upton Sinclair)

Cognitive dissonance means it’s uncomfortable to hold beliefs that contradict your actions. In a Hollywood movie that means you change your actions, but in reality we usually solve this discomfort by changing our beliefs. And very few people are aware of this. So when you have an organization like the IEA that gets its money through consensus and from the status quo, cognitive dissonance theory predicts that IEA members will (unconsciously) embrace beliefs that are in line with the status quo.

Does this mean we should ignore experts? Of course not! First of all, they only have a larger than usual bias in cases of radical innovation where change is discontinuous. Secondly they have vast knowledge that should not go to waste because of a few biases. We just have to neutralize the biases.

What we could do is embrace the methods put forward by people with a better track record in predicting radical innovation. I’ll explain how to do that when I come to solutions but first I must describe how biases are made worse by our tribes.

Explanation (2): Our Tribes make our Biases Worse

Humans are tribal by nature and our tribal affiliations make us disregard certain scenarios. Three examples:

- Global warming. Some 95-98% of scientists accept that we are in an age of man-made global warming. But in US politics, denying it has become part of the Republican tribal identity with only 24% accepting it, versus 80% of Democrats. Another example: if you are a US citizen, you will probably interpret the phrase “America first” differently than people in e.g. The Netherlands.

- Religion. If you are religious, chances are you have the same religion as your parents and think that other religions have a weaker grasp of the truth. However, people in other religions think so too. If I were to use the scientific method to analyze this problem I would say the simplest explanation that makes sense for all religions is that religious teachings are not “true” in the scientific sense but rather a set of values and norms that we learn from the tribe we grow up in.

- Empathy for animals. If we ever reach the age of empathy and become an empathic civilization, I think it’s plausible that we will one day extend our empathy to more than just humans. Once it was considered uncivil to discuss the idea that slaves or women had rights. Now the opposite is true. My personal estimate is that there will come a time when we will treat the suffering of pets and pigs with equal compassion and consider industrial farming one of the worst crimes in history.

A visualization of how the Overton Window limits discussion to what is acceptable

The point I’m making is that the tribe we live in limits the window of discourse we can have. In his keynote and blog post, Liebreich introduces this phenomenon as the “Overton window” (see illustration) and posits that this makes it difficult to make good predictions in energy and mobility.

This is also true for tribes of experts. We already mentioned linear thinking, confirmation bias, status quo bias and system justification bias but all these are exacerbated by the groupthink, information bias and consensus seeking that occur when experts have to work in groups [4]. When that happens it is a safe bet that the resulting predictions will be even more orthodox than the ones the experts would make on their own.

So it is actually not hard to explain why groups of experts are consistently much too conservative and orthodox and how they have a blind spot for radical innovations. The good news is that it’s also not very hard to explain what we have to do about it. Let’s turn to solutions!

The Solution: How to make Better Predictions of Radical Innovation

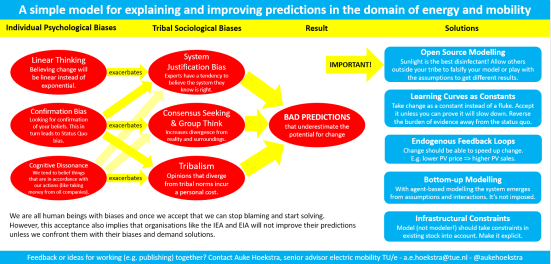

I propose five criteria that I’ve visualized in the picture you saw in the introduction:

If you have read this far I thank you, dear reader. But fair warning: the rest of this post is meant for people who work with energy models professionally. Others might find it too detailed but I wanted to put it out there.

1) Open Source Modelling: the Scientific Method for the 21st Century

Sunlight is the best disinfectant. Allow others outside your tribe to falsify your model or play with the assumptions to get different results. Without this, bias can go unchecked.

The scientific method might have some antidotes against the discussed biases because it tries to be independent (as much as possible) from the scientist making the prediction. To quote Richard Feynman:

The first principle [of science] is that you must not fool yourself and you are the easiest person to fool.

In order to avoid fooling him or herself, a scientist is required to open him or herself up to criticism as much as possible. Science is based on falsifiability: your findings are only taken seriously if they can be disproven. When you make your claims easier to disprove, you are taken more seriously. Or more popularly formulated: “In God we trust, all others bring data.”

When J.B.S. Haldane was asked how evolution theory could be falsified he famously answered: “Fossil rabbits in the Precambrian”: anybody digging through rocks finds rabbit bones everywhere, but if they were found in very old rocks – from before there were supposed to be mammals – this would immediately turn the theory of evolution on its head. (In case you’re wondering: nope.)

Now for obvious reasons, predictions about the future are harder to disprove than predictions about the past. But what we can do is maximize transparency so that each and every piece of data, every equation, every step leading up to your prediction can be scrutinized by others.

One way to implement this is through peer review. Peer reviewing means that before publication, your article is read and critiqued in detail by other scientists who are knowledgeable in your area. It is only printed once you have addressed all criticism. However, you might understand how peer review could also introduce the type of bias towards orthodoxy that we discussed previously. It is well known that professors can sometimes stymie change until they retire. For this reason science is sometimes said to progress one funeral at a time.

But we don’t need to succumb to orthodoxy if we want to keep the most important part of peer review. The essence of peer review is falsifiability: opening yourself up to detailed criticism by other knowledgeable individuals. For radical innovation these knowledgeable individuals should include voices from outside the orthodoxy. E.g. students, scientists (from areas where the Overton window is different) and investigative journalists.

The solution seems obvious to me. Your raw data, your assumptions and your model should be publicly available, preferably in an easy to download format on the web: e.g. a GitHub repository for code and a CSV file for data.

In this respect the current state of energy predictions is miserable. Models like the WEM (World Energy Model) of the IEA (International Energy Agency) are not publicly available, let alone easy to download in the form of a GitHub repository. And this also holds true for most other models. One reason is that experts don’t like to make themselves vulnerable unless they are forced to. An even more important reason is that these predictions are increasingly the intellectual property of consultancy firms that make money from using a black box. The result is that anybody can choose the black box that delivers the results he or she likes and our problems are worse than ever.

If we are even halfway serious about addressing the aforementioned biases, this secrecy is anathema.

The first litmus test: “Are model and data downloadable so anybody can verify and reproduce the results?”

If the answer is “no” I propose we exclude the predictions of the model as the basis for serious decision making. And since the IEA World Energy Model is closed I propose we open it up.

And the first thing I would do if I got my hands on it would be to see if I could make it reproduce past developments in renewable energy more accurately. I expect it will not be able to match past developments, which would basically make it a falsified model.
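To make the idea of testing a model against the past concrete, here is a minimal sketch of such a "hindcast". It is not the IEA's method: the function names are hypothetical, the "observed" series is synthetic (generated with an assumed 30% yearly growth, not real capacity data), and the forecast simply assumes that yearly additions stay flat.

```python
def mean_abs_pct_error(predicted, observed):
    """Mean absolute percentage error between two equally long series."""
    if len(predicted) != len(observed):
        raise ValueError("series must have the same length")
    return sum(abs(p - o) / abs(o) for p, o in zip(predicted, observed)) / len(observed)


def flat_additions_forecast(start_capacity, yearly_addition, years):
    """Forecast that assumes the same capacity is added every year."""
    return [start_capacity + yearly_addition * (t + 1) for t in range(years)]


# Synthetic 'observed' series (NOT real data): capacity growing 30% per year.
observed = [10.0 * 1.3 ** (t + 1) for t in range(10)]

# Hindcast: start from year 0 and assume additions stay at the first-year level.
hindcast = flat_additions_forecast(10.0, observed[0] - 10.0, 10)

error = mean_abs_pct_error(hindcast, observed)
print(f"hindcast error over 10 years: {error:.0%}")
```

With open data and an open model, anybody could run the same kind of check with the real historical series in place of the synthetic one.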

2) Learning Curves as Constants

Take change as a constant instead of a fluke. Accept it unless you can prove it will slow down. Reverse the burden of evidence away from the status quo.

I already mentioned Ray Kurzweil. What he discovered is that often he had ideas for inventions that were ahead of their time: synthesizers, optical character recognition, speech recognition, artificial intelligence, etc. They relied on technology that was not there yet. So he thought: if I could predict how fast supporting technologies like computers will progress I can estimate when my invention should be ready for market. He started doing that with a lot of success and it is now used by institutes like the Singularity University. I propose we use that approach in renewable energy too.

If you simplify the approach to the utmost essential, you look at how a technology progresses over time and you extrapolate the past into the future. This is commonly called the learning curve approach. This goes further than how economists have used learning curves in the past. In the past it was used narrowly for production processes in a factory that showed a tendency to lower costs with increasing economies of scale. The approach we are talking about here goes much further. It assumes that learning curves about technologies can hold over the total of human efforts. So this includes not only factory floors but also university researchers, venture capitalists and inventors. One of the examples that still blows me away is that Moore’s Law actually holds over multiple technologies.

Moore’s law is not only true for ICs but also for information technologies that came before it.

If this trend holds (and there is every indication it is doing just that), the computing that $1000 can buy will increase from the raw brain power of a not so smart mouse in 2017 to the brain power of all human brains put together in 2050. Now a machine with loads of brain power is not automatically smart, but when combined with advances in visual systems and pattern recognition I find it hard to tell people with a straight face that we will be driving cars ourselves in the future.

Solar panels are an equivalent development in the renewable energy domain:

And actually this is true for most energy technologies as the next picture from the European Commission shows (the picture is outdated – from 2003 – but gives a nice overview):

If you see this you think everything is getting cheaper rapidly. But there are two reasons renewables benefit from this learning much more than fossil fuels:

- Renewables don’t use fuel. So once you’ve built your solar or wind farm, the energy is essentially free. With a gas fired power plant your fuel is actually much costlier than building your power plant (over the lifetime of the plant) so the learning curve is of limited influence.

- Renewables are still small. The curves in the picture show that increasing the volume decreases the price. But if you look at mature technologies that hardly grow anymore, the effect of learning is minimal. But with e.g. solar, off-shore wind and batteries there is an enormous amount of price reducing learning in store.
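The relation behind such curves can be sketched in a few lines. This is the common textbook form of a learning curve (cost falls by a fixed percentage with every doubling of cumulative volume), not a formula taken from any of the models discussed here. The 20% learning rate and the cost index of 100 are illustrative assumptions and the function names are hypothetical.

```python
import math


def learning_exponent(learning_rate):
    """Exponent b such that each doubling of volume cuts cost by `learning_rate`."""
    return -math.log2(1.0 - learning_rate)


def unit_cost(initial_cost, initial_volume, cumulative_volume, learning_rate):
    """Cost per unit once cumulative production has reached `cumulative_volume`."""
    b = learning_exponent(learning_rate)
    return initial_cost * (cumulative_volume / initial_volume) ** (-b)


# Illustrative numbers only: a 20% learning rate and a starting cost index of 100.
for doublings in range(4):
    volume = 2 ** doublings
    print(doublings, round(unit_cost(100.0, 1.0, volume, 0.20), 1))
```

This also shows why size matters: a small technology that grows tenfold goes through more than three doublings, while a mature technology that grows by ten percent barely moves along its curve.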

So learning curves are what enable renewable generation to blow fossil fuels right out of the water. But what about storage for when the wind doesn’t blow and the sun doesn’t shine? For those cases there are batteries, and here a new graph from Schmidt et al. in Nature is illuminating:

So for storage, which enables solar and wind to supply base load and which makes electric vehicles cheaper, the learning curves are equally or more aggressive.

Now this is not where understanding ends. E.g. I do a lot of research into the raw materials that go into lithium batteries and electric drivetrains so I can double check the learning curves. And I often end up making them a little less aggressive in the long term just to be sure. But they are still my starting point. Ignoring them would be taking the whole shebang of biases on board. So:

The second litmus test: “Are all the relevant technologies modelled as individual learning curves and are their formulas and influence verifiable?”

Now I don’t know how well the IEA model scores here (since it’s secret) but indirectly I’ve heard it whispered that in the WEM, the learning curves of production are modeled but storage is very expensive, which impedes solar and wind. My guess is that demand-response, short term storage (batteries) and long term storage (e.g. electrolysis and fuel cells) have been modeled inadequately.

3) Endogenous Feedback Loops

Change should be able to speed up change. E.g. lower PV price => higher PV sales.

When I started in this field ten years or so ago I came upon an article using learning curves and feedback loops for solar energy. I noticed that the growth per year in the article was never more than 27%. So I called the researcher and asked: “Why?” He basically told me that going any higher would make the article an object of ridicule. But that’s not a good reason! That’s a whole list of aforementioned biases at work! Since then the PV market has grown by 43% per year on average, so the overall growth has been several times higher than the article predicted. That also means more price reduction (using the learning curve) so that the price is now less than half of what the article predicted. And this article was so positive that it was considered almost unserious…
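A quick compounding check shows how much the difference between 27% and 43% matters. The sketch below assumes exactly ten years and treats both percentages as constant yearly growth rates, which simplifies "never more than 27%" and "on average 43%".

```python
def growth_factor(annual_growth, years):
    """Total multiplication of a market that grows `annual_growth` per year."""
    return (1.0 + annual_growth) ** years


years = 10  # assumption: "ten years or so"
capped = growth_factor(0.27, years)  # the article's upper bound, every year
actual = growth_factor(0.43, years)  # the average growth mentioned above

print(f"27% per year for {years} years: x{capped:.1f}")
print(f"43% per year for {years} years: x{actual:.1f}")
print(f"ratio between the two: {actual / capped:.1f}")
```

Under these assumptions the market ends up roughly eleven times larger in the capped case and roughly thirty-six times larger in the other, a difference of more than a factor three.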

The next graph neatly visualizes that change can occur relatively rapidly:

And multiple applications have noted that endogenous feedback loops lead to a more renewable friendly response. A review of 25 well known models concludes that the impact is significant [5]. So:

The third litmus test: “Are the important learning curves and adoption rates connected through feedback loops?”
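A minimal sketch of what such a loop looks like in code: price follows a learning curve on cumulative volume, and yearly sales respond to price. All parameters (20% learning rate, a price elasticity of 2, starting sales of 0.3) are illustrative assumptions and not taken from any of the reviewed models. Setting `feedback=False` cuts the loop so that buyers keep seeing the starting price.

```python
import math


def simulate(years, learning_rate, elasticity, feedback=True):
    """Yearly sales when price and sales do (or do not) influence each other."""
    b = -math.log2(1.0 - learning_rate)  # learning-curve exponent
    cumulative, price0 = 1.0, 1.0        # normalised starting point
    sales_per_year = []
    for _ in range(years):
        price = price0 * cumulative ** (-b)           # more volume -> lower price
        demand_price = price if feedback else price0  # loop cut: buyers see old price
        sales = 0.3 * (demand_price / price0) ** (-elasticity)  # lower price -> more sales
        cumulative += sales
        sales_per_year.append(sales)
    return sales_per_year


with_loop = simulate(15, learning_rate=0.2, elasticity=2.0)
without_loop = simulate(15, learning_rate=0.2, elasticity=2.0, feedback=False)
print(f"final-year sales with loop:    {with_loop[-1]:.2f}")
print(f"final-year sales without loop: {without_loop[-1]:.2f}")
```

Without the loop, this toy model produces flat yearly additions; with the loop, additions grow every year.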

4) Bottom-up modelling

With agent-based modelling the system emerges from assumptions and interactions. It’s not imposed. Unfortunately almost all models are still equilibrium models that produce unrealistic results, especially when the topic is radical innovation.

Making an open source model using learning curves is relatively easy and will overcome most biases. Adding feedback loops is somewhat more complex and requires some macros in Excel or good system dynamics software. But if you really want to model radical change you have to take the next step and that means making full use of computers.

Let’s start with how NOT to do it. Economics has a track record to rival that of the IEA on solar panels. In my humble opinion, people outside of economics would be horrified if they knew how unrealistic most economic models are. The dominant models are called equilibrium models (or CGE for Computable General Equilibrium or DSGE for Dynamic Stochastic General Equilibrium). Fagiolo et al. [6] document how these DSGE models became the consensus in 2008. Economists triumphantly exclaimed that monetary policy was finally becoming science. And then, a few months later, the financial crisis unexpectedly hit [7]–[9].

Equilibrium models are based on “the holy trinity of rationality, selfishness, and equilibrium” [10]. They assume “rational” actors that can be represented by a couple of “representative” aggregated agents [6]. Such “rational” actors only strive for utility maximization. They immediately know the utility of every product and price on the market. They are impervious to status, strategizing, populism, idealism, tribalism, hearsay or marketing. They don’t empathize with future generations because the discount rate tells them that a life in 2100 is worth only 1/100th of a life today. They cannot use their wealth to help people in other countries because Negishi welfare weights forbid it. Furthermore the actors function in “ideal” markets. This means, among other things, no bankers with perverse incentives, no lobbyists, no political games, no idealists, no monopolistic tendencies, no network effects, no incumbent resistance to change, et cetera.
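To see what kind of discount rate produces such a weight, here is a small worked calculation. It assumes a constant yearly rate and takes 2017 as “today”; both are assumptions for illustration, and actual models may discount differently.

```python
def present_value_weight(discount_rate, years):
    """Weight given today to something that happens `years` from now."""
    return (1.0 + discount_rate) ** (-years)


def implied_discount_rate(weight, years):
    """Constant yearly discount rate that produces the given weight."""
    return weight ** (-1.0 / years) - 1.0


years = 2100 - 2017  # assumption: "today" is 2017
rate = implied_discount_rate(1 / 100, years)
print(f"implied discount rate: {rate:.1%}")
print(f"check: weight in 2100 = {present_value_weight(rate, years):.4f}")
```

Under these assumptions a yearly discount rate of a little under 6% is enough to shrink a value in 2100 to one hundredth of its value today.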

If you think this sounds completely unrealistic you are in good company: most psychologists, sociologists, political scientists, marketers and entrepreneurs agree with you [11]. They accuse economists of “a steadfast refusal to face facts” [12]. It’s all due to a well-meant attempt to capture our civilization in a few mathematical equations. But it’s not working. To quote Ackerman [13]: “The mathematical dead end reached by general equilibrium analysis is not due to obscure or esoteric aspects of the model, but rather arises from intentional design features … Modification of economic theory … will require a new model of consumer choice, nonlinear analyses of social interactions, and recognition of the central role of institutional and social constraints”. Nobel laureate Paul Krugman adds [14]: “As I see it, the economics profession went astray because economists, as a group, mistook beauty, clad in impressive-looking mathematics, for truth. … Economists need to abandon the neat but wrong solution of assuming that everyone is rational and markets work perfectly. The vision that emerges as the profession rethinks its foundations may not be all that clear; it certainly won’t be neat; but we can hope that it will have the virtue of being at least partly right.”

If you think this has nothing to do with energy I have more bad news for you. Currently most energy models are largely equilibrium models. E.g. the World Energy Model (used by the IEA for its influential “Energy Outlook” series), POLES (used by Enerdata) and PRIMES (used by the European Commission) [15]. Stanton et al. [16] also review the following integrated assessment models using CGE: JAM; IGEM; IGSM/EPPA (MIT); SMG; WORLDSCAN (CPB); ABARE-GTEM; G-CUBED; MS-MRT; AIM; IMACLIM-R; WIAGEM; MiniCAM and GIM.

But there is hope! From biology and ecology we have learned how real world systems like our society work. We call them complex adaptive systems now. The bad news is that such systems are confusing to our primate brain. (Due to non-linearity, feedback, time delays and interdependencies [18]. We see that in empirical studies [19]–[23].) The good news is that we can understand them with the help of a computer if we use agent-based models (ABM) [17], [24], [25]. Some are so enthusiastic that they call it “a third way of doing science” and I agree [26], [27].

Three simulation methods that improve equilibrium models [17]

ABM is a young discipline with the first computer models in the 80’s and 90’s but ABM is now a fast growing field [28], [29] and the preferred modelling method for complex adaptive socio-technical systems [30], [31]. It’s widely used in health [32]; organizational science [33]; emergency response [34]; land use [35], [36]; manufacturing [37]; ecosystem management [38]; and marketing [39], [40]. In economics there is now a 900 page handbook of agent-based computational economics [41] (although most students are still taught the old equilibrium models).

Energy systems are ideal for ABM [42]–[45]. Very active fields are smart grids [46]–[48], electricity markets [49]–[51], distributed generation [52] and transportation [53]–[56]. And if you look at models that aim to manage the transition to renewable energy, half are already ABMs [57].

The fourth litmus test: does the model use bottom-up agent-based modelling?
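For readers who have never seen one, this is roughly what the core of an agent-based model looks like. It is a toy sketch, not one of the cited models: every agent gets its own (randomly drawn) maximum price, and the price rule at the end of each year is a hypothetical stand-in for a learning curve. The adoption curve is not imposed anywhere; it emerges from the individual decisions.

```python
import random


def run_adoption_model(n_agents=1000, years=20, seed=1):
    """Share of adopters per year in a toy agent-based model of buying solar panels."""
    rng = random.Random(seed)
    # Each agent has its own maximum price it is willing to pay (heterogeneity).
    willingness = [rng.uniform(0.2, 1.1) for _ in range(n_agents)]
    adopted = [False] * n_agents
    price = 1.0
    history = []
    for _ in range(years):
        for i in range(n_agents):
            # An agent acts on its own rule; no market equilibrium is imposed.
            if not adopted[i] and willingness[i] >= price:
                adopted[i] = True
        share = sum(adopted) / n_agents
        history.append(share)
        # Hypothetical price rule: a base decline plus extra decline from adoption.
        price *= 0.97 - 0.10 * share
    return history


history = run_adoption_model()
print([round(share, 2) for share in history])
```

A real model would replace the single willingness-to-pay number with richer decision rules (status, neighbours, subsidies) and the price rule with verifiable learning curves.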

5) Realistic Infrastructure

Our behavior is also determined by our environment. A model should contain buildings, grids etc.

Let’s take electric vehicle adoption as an example. Batteries need to be cheap enough before people will buy electric vehicles, that is obvious and addressed by requirements 2 (use learning curves) and 3 (endogenous feedback loops). Maybe you even model people that buy electric vehicles, thereby addressing my requirement 4 (bottom-up agent-based modelling).

But even then there will be barriers to adoption. If we take the case of solar panels you must have a roof to put them on if you want to buy them for your house. So space is an important requirement. If your neighbor already has them, you might speak to him or her about it, which increases your chance to buy them, so your environment is important. And if a country gets a lot of solar panels it might have to think of how to deal with peaks on the electricity grid. So the grid is also important.

I think the best way to deal with this is to model the geographic infrastructure explicitly. It’s often called GIS data for geographical information system. Fortunately more and more of this type of information is available as open source data. E.g. Google Maps uses such data. The advantage of using GIS data is not only that it forces you to deal with limitations in infrastructure. It also enables coupling different systems based on their location. You can download it and use it in your agent-based model using state-of-the-art tools like GAMA.

Example of a neighborhood with different layers in one of my models

Now I’m not claiming that every good model should use GIS data. But it really helps you to integrate different subsystems based on their spatial coordinates. Furthermore, using real world data like this forces you to deal with reality instead of preconceived notions and thus really helps to overcome biases: it’s simply very hard to model your biases into such a model.

The fifth litmus test: does the model use realistic spatial relationships and infrastructural constraints?
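The roof and neighbor effects described above can be sketched without any GIS tooling. The example below uses a plain square grid of houses as a stand-in for real spatial data; it does not use GAMA or actual GIS files. The share of suitable roofs and the adoption probabilities are made-up assumptions.

```python
import random


def simulate_neighbourhood(size=20, roof_share=0.6, years=15, seed=3):
    """Share of houses with panels per year on a size x size grid of houses."""
    rng = random.Random(seed)
    # Infrastructure constraint: only some houses have a roof suitable for panels.
    has_roof = [[rng.random() < roof_share for _ in range(size)] for _ in range(size)]
    has_panels = [[False] * size for _ in range(size)]
    history = []
    for _ in range(years):
        snapshot = [row[:] for row in has_panels]  # decisions use last year's state
        for y in range(size):
            for x in range(size):
                if not has_roof[y][x] or snapshot[y][x]:
                    continue
                # Count direct neighbours (up, down, left, right) that have panels.
                neighbours = sum(
                    snapshot[ny][nx]
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                    if 0 <= ny < size and 0 <= nx < size
                )
                # Hypothetical rule: 3% base chance plus 15% per adopting neighbour.
                if rng.random() < 0.03 + 0.15 * neighbours:
                    has_panels[y][x] = True
        history.append(sum(map(sum, has_panels)) / size ** 2)
    return history, sum(map(sum, has_roof)) / size ** 2


history, roof_fraction = simulate_neighbourhood()
print(f"suitable roofs: {roof_fraction:.0%}, adoption after 15 years: {history[-1]:.0%}")
```

However optimistic the other assumptions are, adoption in this sketch can never exceed the share of suitable roofs, which is exactly the kind of limit that explicit infrastructure puts on a model.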

Wow, you really made it this far? Congratulations! I would love to hear your critique or feedback. And I hope there are some people who will in some way improve an energy model because they read this. Here’s to hoping!

References

[1] R. Nickerson, “Confirmation bias: a ubiquitous phenomenon in many guises,” Rev. Gen. Psychol., vol. 2, no. 2, pp. 175–220, 1998.

[2] W. Samuelson and R. Zeckhauser, “Status quo bias in decision making,” J. Risk Uncertain., vol. 1, no. 1, pp. 7–59, Mar. 1988.

[3] J. T. Jost, M. R. Banaji, and B. A. Nosek, “A Decade of System Justification Theory: Accumulated Evidence of Conscious and Unconscious Bolstering of the Status Quo,” Polit. Psychol., vol. 25, no. 6, pp. 881–919, Dec. 2004.

[4] T. Postmes, R. Spears, and S. Cihangir, “Quality of decision making and group norms,” J. Pers. Soc. Psychol., vol. 80, no. 6, pp. 918–930, 2001.

[5] K. Gillingham, R. G. Newell, and W. A. Pizer, “Modeling endogenous technological change for climate policy analysis,” Energy Econ., vol. 30, no. 6, pp. 2734–2753, Nov. 2008.

[6] G. Fagiolo and A. Roventini, “Macroeconomic policy in DSGE and agent-based models redux: New developments and challenges ahead,” 2016.

[7] F. S. Mishkin, “Will Monetary Policy Become More of a Science?,” in The Science and Practice of Monetary Policy Today, Springer, Berlin, Heidelberg, 2010, pp. 81–103.

[8] M. Goodfriend, “How the World Achieved Consensus on Monetary Policy,” J. Econ. Perspect., vol. 21, no. 4, pp. 47–68, 2007.

[9] J. Galí and M. Gertler, “Macroeconomic modeling for monetary policy evaluation,” J. Econ. Perspect., vol. 21, no. 4, pp. 25–45, 2007.

[10] D. Colander, R. Holt, and J. B. Rosser Jr., “The changing face of mainstream economics,” Rev. Polit. Econ., vol. 16, no. 4, pp. 485–499, Oct. 2004.

[11] V. Mosini, Equilibrium in Economics: Scope and Limits. Routledge, 2008.

[12] C. A. E. Goodhart, “The Continuing Muddles of Monetary Theory: A Steadfast Refusal to Face Facts,” Economica, vol. 76, no. s1, pp. 821–830, 2009.

[13] F. Ackerman, “Still dead after all these years: interpreting the failure of general equilibrium theory,” J. Econ. Methodol., vol. 9, no. 2, pp. 119–139, 2002.

[14] P. Krugman, “How Did Economists Get It So Wrong?,” The New York Times, 02-Sep-2009.

[15] A. Herbst, F. Toro, F. Reitze, and E. Jochem, “Introduction to energy systems modelling,” Swiss J. Econ. Stat., vol. 148, no. 2, pp. 111–135, 2012.

[16] E. A. Stanton, F. Ackerman, and S. Kartha, “Inside the integrated assessment models: Four issues in climate economics,” Clim. Dev., vol. 1, no. 2, p. 166, Jul. 2009.

[17] A. Borshchev, The Big Book of Simulation Modeling: Multimethod Modeling with Anylogic 6. Lisle, IL: AnyLogic North America, 2013.

[18] J. D. Sterman, “Learning in and about complex systems,” Syst. Dyn. Rev., vol. 10, no. 2–3, pp. 291–330, Jun. 1994.

[19] P. W. B. Atkins, R. E. Wood, and P. J. Rutgers, “The effects of feedback format on dynamic decision making,” Organ. Behav. Hum. Decis. Process., vol. 88, no. 2, pp. 587–604, Jul. 2002.

[20] B. Brehmer, “Dynamic decision making: Human control of complex systems,” Acta Psychol. (Amst.), vol. 81, no. 3, pp. 211–241, Dec. 1992.

[21] E. Diehl and J. D. Sterman, “Effects of Feedback Complexity on Dynamic Decision Making,” Organ. Behav. Hum. Decis. Process., vol. 62, no. 2, pp. 198–215, May 1995.

[22] D. N. Kleinmuntz, “Information processing and misperceptions of the implications of feedback in dynamic decision making,” Syst. Dyn. Rev., vol. 9, no. 3, pp. 223–237, Sep. 1993.

[23] J. D. Sterman, “Modeling Managerial Behavior: Misperceptions of Feedback in a Dynamic Decision Making Experiment,” Manag. Sci., vol. 35, no. 3, pp. 321–339, Mar. 1989.

[24] M. A. Bedau, “Weak Emergence,” Noûs, vol. 31, pp. 375–399, Jan. 1997.

[25] J. Epstein and R. Axtell, Growing Artificial Societies: Social Science From the Bottom Up (Complex Adaptive Systems). Brookings Institution Press MIT Press, 1996.

[26] R. Axelrod, “Advancing the Art of Simulation in the Social Sciences,” in Simulating Social Phenomena, R. Conte, R. Hegselmann, and P. Terna, Eds. Springer Berlin Heidelberg, 1997, pp. 21–40.

[27] J. Epstein, “Agent-Based Computational Models And Generative Social Science,” Gener. Soc. Sci. Stud. Agent-Based Comput. Model., p. 4, 2006.

[28] E. Bonabeau, “Agent-based modeling: Methods and techniques for simulating human systems,” Proc. Natl. Acad. Sci., vol. 99, no. suppl 3, pp. 7280–7287, May 2002.

[29] S. de Marchi and S. E. Page, “Agent-Based Models,” Annu. Rev. Polit. Sci., vol. 17, no. 1, pp. 1–20, 2014.

[30] K. H. Van Dam, “Capturing socio-technical systems with agent-based modelling,” PhD Thesis, TU Delft, Delft University of Technology, 2009.

[31] K. H. van Dam, I. Nikolic, and Z. Lukszo, Agent-Based Modelling of Socio-Technical Systems. Springer Publishing Company, Incorporated, 2014.

[32] R. Hilscher, “Review of A Spatial Agent-Based Simulation Modeling in Public Health: Design, Implementation, and Applications for Malaria Epidemiology (Wiley Series in Modeling and Simulation),” 13-Jan-2017. [Online]. Available: http://jasss.soc.surrey.ac.uk/20/1/reviews/2.html. [Accessed: 01-Apr-2017].

[33] G. Fioretti, “Agent-Based Simulation Models in Organization Science,” Organ. Res. Methods, vol. 16, no. 2, pp. 227–242, Apr. 2013.

[34] G. I. Hawe, G. Coates, D. T. Wilson, and R. S. Crouch, “Agent-based simulation for large-scale emergency response: A survey of usage and implementation,” ACM Comput. Surv., vol. 45, no. 1, pp. 1–51, Nov. 2012.

[35] R. B. Matthews, N. G. Gilbert, A. Roach, J. G. Polhill, and N. M. Gotts, “Agent-based land-use models: a review of applications,” Landsc. Ecol., vol. 22, no. 10, pp. 1447–1459, Dec. 2007.

[36] D. C. Parker, S. M. Manson, M. A. Janssen, M. J. Hoffmann, and P. Deadman, “Multi-Agent Systems for the Simulation of Land-Use and Land-Cover Change: A Review,” Ann. Assoc. Am. Geogr., vol. 93, no. 2, pp. 314–337, Jun. 2003.

[37] W. Shen, Q. Hao, H. J. Yoon, and D. H. Norrie, “Applications of agent-based systems in intelligent manufacturing: An updated review,” Adv. Eng. Inform., vol. 20, no. 4, pp. 415–431, Oct. 2006.

[38] F. Bousquet and C. Le Page, “Multi-agent simulations and ecosystem management: a review,” Ecol. Model., vol. 176, no. 3–4, pp. 313–332, Sep. 2004.

[39] G. P. Richardson and P. Otto, “Applications of system dynamics in marketing: Editorial,” J. Bus. Res., vol. 61, no. 11, pp. 1099–1101, Nov. 2008.

[40] A. Negahban and L. Yilmaz, “Agent-based simulation applications in marketing research: an integrated review,” J. Simul., vol. 8, no. 2, pp. 129–142, May 2014.

[41] L. Tesfatsion and K. L. Judd, Eds., Handbook of computational economics volume 2: Agent-based computational economics. Amsterdam Boston: Elsevier, 2006.

[42] C. S. E. Bale, L. Varga, and T. J. Foxon, “Energy and complexity: New ways forward,” Appl. Energy, vol. 138, pp. 150–159, Jan. 2015.

[43] T. Ma and Y. Nakamori, “Modeling technological change in energy systems – From optimization to agent-based modeling,” Energy, vol. 34, no. 7, pp. 873–879, 2009.

[44] E. J. L. Chappin and G. P. J. Dijkema, “Agent-based modelling of energy infrastructure transitions,” Int. J. Crit. Infrastruct., vol. 6, no. 2, pp. 106–130, Jan. 2010.

[45] V. Rai and A. D. Henry, “Agent-based modelling of consumer energy choices,” Nat. Clim. Change, vol. 6, no. 6, pp. 556–562, Jun. 2016.

[46] P. Ringler, D. Keles, and W. Fichtner, “Agent-based modelling and simulation of smart electricity grids and markets – A literature review,” Renew. Sustain. Energy Rev., vol. 57, pp. 205–215, May 2016.

[47] R. Roche, B. Blunier, A. Miraoui, V. Hilaire, and A. Koukam, “Multi-agent systems for grid energy management: A short review,” in IECON 2010 – 36th Annual Conference on IEEE Industrial Electronics Society, 2010, pp. 3341–3346.

[48] P. Vrba et al., “A Review of Agent and Service-Oriented Concepts Applied to Intelligent Energy Systems,” IEEE Trans. Ind. Inform., vol. 10, no. 3, pp. 1890–1903, Aug. 2014.

[49] F. Sensfuß, M. Ragwitz, M. Genoese, and D. Möst, “Agent-based simulation of electricity markets: a literature review,” Working paper sustainability and innovation, 2007.

[50] R. Marks, “Chapter 27 Market Design Using Agent-Based Models,” in Handbook of Computational Economics, vol. 2, L. Tesfatsion and K. L. Judd, Eds. Elsevier, 2006, pp. 1339–1380.

[51] J. Babic and V. Podobnik, “A review of agent-based modelling of electricity markets in future energy eco-systems,” in 2016 International Multidisciplinary Conference on Computer and Energy Science (SpliTech), 2016, pp. 1–9.

[52] J. G. Veneman, M. A. Oey, L. J. Kortmann, F. M. Brazier, and L. J. de Vries, “A Review of Agent-based Models for Forecasting the Deployment of Distributed Generation in Energy Systems,” in Proceedings of the 2011 Grand Challenges on Modeling and Simulation Conference, Vista, CA, 2011, pp. 16–21.

[53] B. Chen and H. H. Cheng, “A Review of the Applications of Agent Technology in Traffic and Transportation Systems,” IEEE Trans. Intell. Transp. Syst., vol. 11, no. 2, pp. 485–497, Jun. 2010.

[54] A. L. C. Bazzan and F. Klügl, “A review on agent-based technology for traffic and transportation,” Knowl. Eng. Rev., vol. 29, no. 03, pp. 375–403, Jun. 2014.

[55] US Department of Transportation, “A Primer for Agent-Based Simulation and Modeling in Transportation Applications.” [Online]. Available: https://www.fhwa.dot.gov/advancedresearch/pubs/13054/13054.pdf. [Accessed: 13-Apr-2016].

[56] N. Ronald, R. Thompson, and S. Winter, “Simulating Demand-responsive Transportation: A Review of Agent-based Approaches,” Transp. Rev., vol. 35, no. 4, pp. 404–421, Jul. 2015.

[57] F. G. N. Li, E. Trutnevyte, and N. Strachan, “A review of socio-technical energy transition (STET) models,” Technol. Forecast. Soc. Change, vol. 100, pp. 290–305, Nov. 2015.